Mark J. Nelson (2016). Investigating vanilla MCTS scaling on the GVG-AI game corpus. In Proceedings of the IEEE Conference on Computational Intelligence and Games. DOI: 10.1109/CIG.2016.7860443

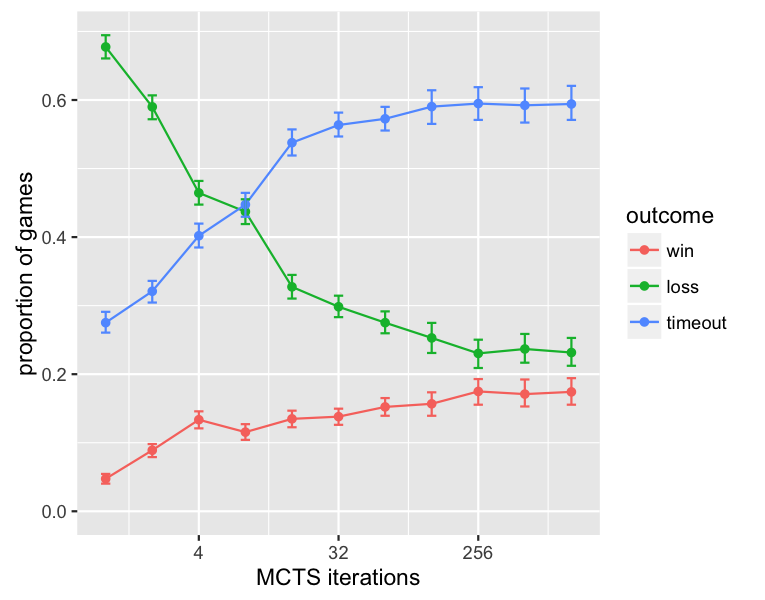

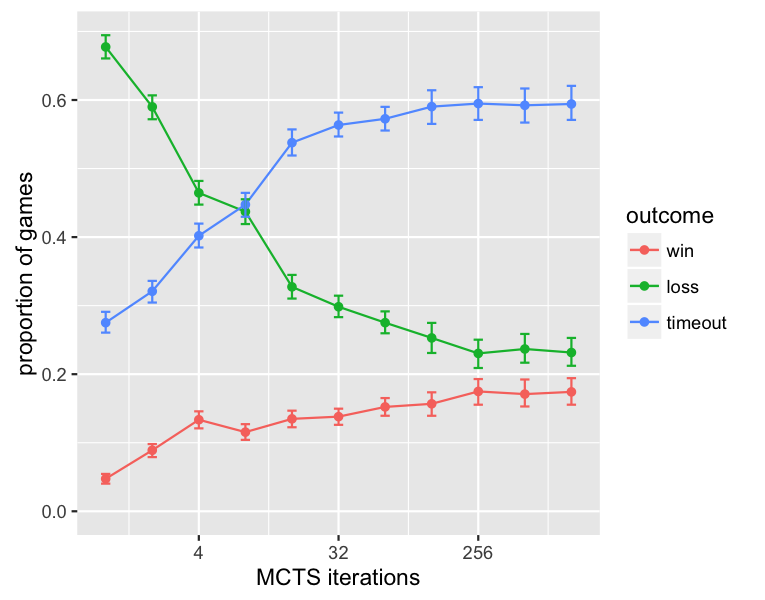

The General Video Game AI Competition (GVG-AI) invites submissions of controllers to play games specified in the Video Game Description Language (VGDL), testing them against each other and against several baselines. One baseline that has done surprisingly well in some of the competitions is sampleMCTS, a straightforward implementation of Monte Carlo tree search (MCTS). Although it has done worse in other iterations of the competition, its occasional success has produced a nagging worry that the GVG-AI competition might be too easy, especially since performance profiling suggests that optimizations to the GVG-AI competition framework could significantly increase the number of MCTS iterations completed within a given time limit. To better understand the potential performance of the baseline vanilla MCTS controller, I perform scaling experiments, running it against the 62 games in the public GVG-AI corpus as the time budget is varied from about 1/30 of the current competition's budget to around 30x that budget. I find that it does not in fact master the games even given 30x the current time budget, so the challenge of the GVG-AI competition is safe (at least against this baseline). However, I do find that, given enough computational budget, it manages to avoid explicitly losing on most games, despite failing to win them and ultimately losing as time expires. This suggests an asymmetry in the current GVG-AI competition's challenge: not losing is significantly easier than winning.
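As a rough illustration of the scaling sweep described above, the following sketch generates geometrically spaced time-budget multipliers between 1/30 and 30x. The abstract does not state how the intermediate budgets were chosen, so the geometric spacing, the number of steps, the function names, and the `base_ms` value are all assumptions made for illustration, not the paper's actual experimental setup.

```python
import math


def budget_multipliers(low=1 / 30, high=30.0, steps=7):
    """Return `steps` geometrically spaced multipliers from `low` to `high`.

    Geometric spacing and the default of 7 steps are assumptions;
    the abstract only gives the two endpoints (about 1/30 and about 30x).
    """
    if steps < 2:
        raise ValueError("steps must be at least 2")
    ratio = (high / low) ** (1 / (steps - 1))
    return [low * ratio ** i for i in range(steps)]


def budgets_ms(base_ms, multipliers):
    """Scale a per-move time budget (in milliseconds) by each multiplier."""
    return [base_ms * m for m in multipliers]


if __name__ == "__main__":
    # base_ms is a hypothetical placeholder for the competition's per-move budget.
    for m, b in zip(budget_multipliers(), budgets_ms(40.0, budget_multipliers())):
        print(f"{m:8.3f}x -> {b:9.2f} ms")
```

With an odd number of steps, the middle multiplier is 1, so the sweep includes the unscaled budget as its midpoint.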
